70+ Men's Hairstyles for the 40s, No Styling Required 187652

Assuming all the following expectations exist, we have
(i) $E[a \mid Y] = a$
(ii) $E[aX + bZ \mid Y] = aE[X \mid Y] + bE[Z \mid Y]$
(iii) $E[X \mid Y] \ge 0$ if $X \ge 0$
(iv) $E[X \mid Y] = E[X]$ if $X$ and $Y$ are independent
(v) $E[E[X \mid Y]] = E[X]$
(vi) $E[X g(Y) \mid Y] = g(Y) E[X \mid Y]$. In particular, $E[g(Y) \mid Y] = g(Y)$
(vii) $E[X \mid Y, g(Y)] = E[X \mid Y]$
(viii) $E[E[X \mid Y, Z] \mid Y] = E[X \mid Y]$

Partial proofs

In this paper, the problem of global µ-stability for quaternion-valued neural networks with time-varying delays and unbounded distributed delays is investigated. To avoid the noncommutativity of quaternion multiplication, the quaternion-valued neural network is decomposed into two complex-valued systems. By employing the homomorphic mapping principle, a sufficient
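Property (v) in the list above, $E[E[X \mid Y]] = E[X]$, can be checked by exact enumeration on a small discrete example. The following is a minimal sketch; the joint pmf and the helper names are hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from fractions import Fraction

# Hypothetical joint pmf of (X, Y); the values are illustrative only.
joint = {
    (0, 0): Fraction(1, 8),
    (1, 0): Fraction(3, 8),
    (2, 1): Fraction(1, 4),
    (5, 1): Fraction(1, 4),
}


def expectation_x(pmf):
    """E[X] computed directly from the joint pmf."""
    return sum(x * p for (x, _), p in pmf.items())


def conditional_expectation_given_y(pmf):
    """Return ({y: E[X | Y = y]}, {y: P(Y = y)})."""
    p_y = defaultdict(Fraction)
    xp_y = defaultdict(Fraction)
    for (x, y), p in pmf.items():
        p_y[y] += p
        xp_y[y] += x * p
    cond = {y: xp_y[y] / p_y[y] for y in p_y}
    return cond, dict(p_y)


cond, p_y = conditional_expectation_given_y(joint)
# E[E[X | Y]]: average the conditional means over the distribution of Y.
tower = sum(cond[y] * p_y[y] for y in p_y)
print(tower, expectation_x(joint))
```

Using `Fraction` keeps the arithmetic exact, so the two sides can be compared with `==` rather than a tolerance.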

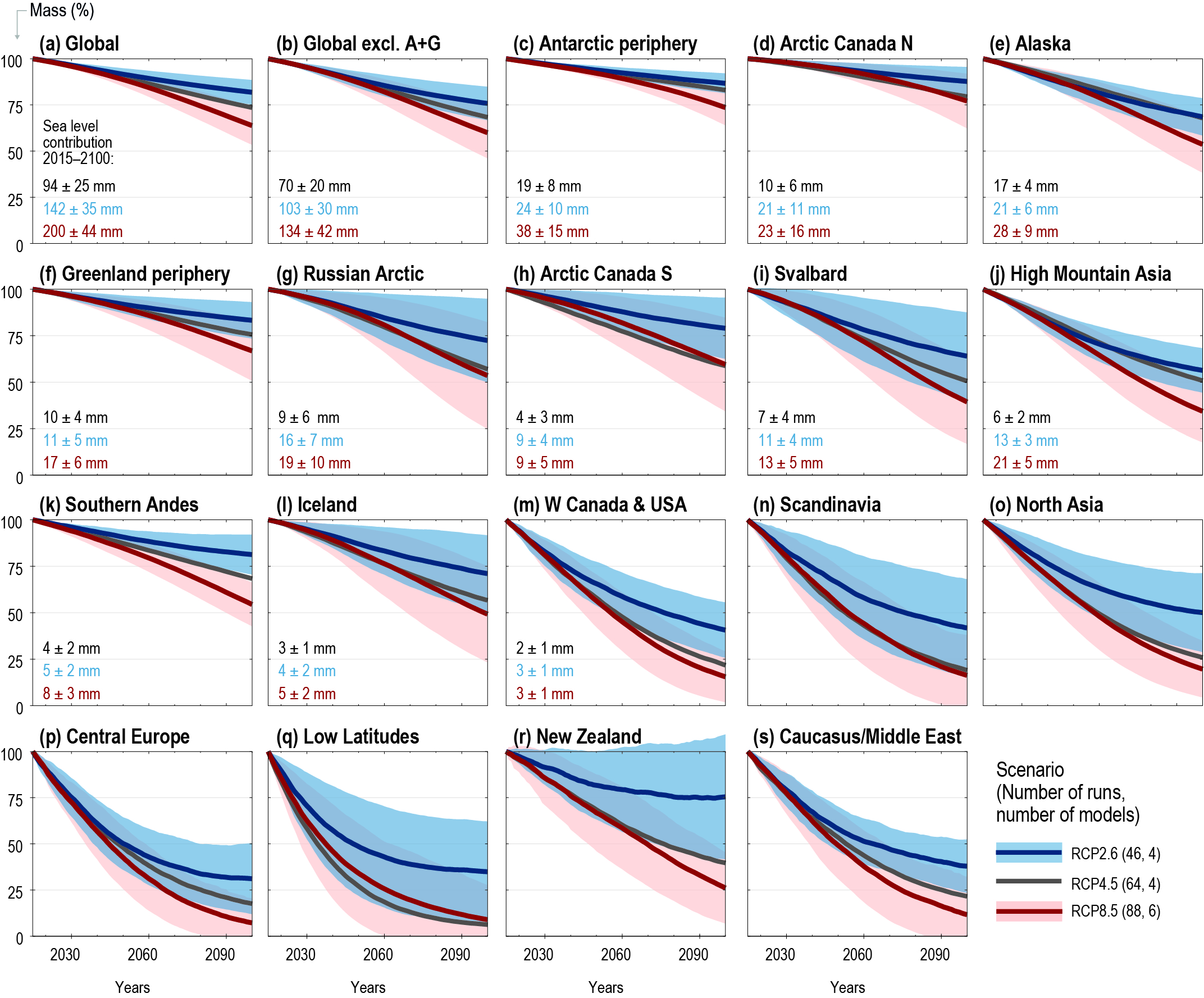

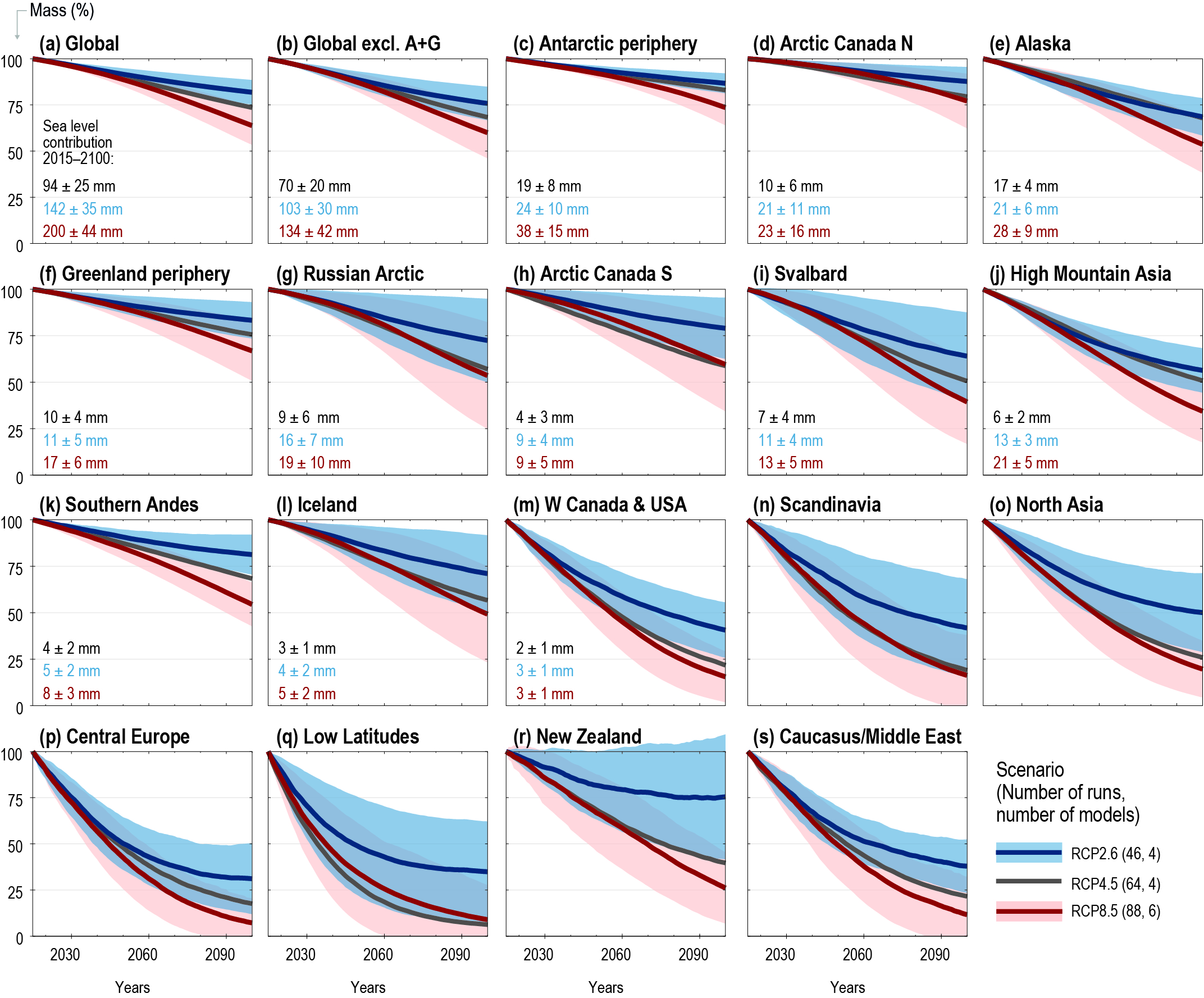

Chapter 2 High Mountain Areas Special Report On The Ocean And Cryosphere In A Changing Climate

40s Men's Hairstyles, No Styling Required

takes the form $V(x, y, z)$, so the Lagrangian is

$$L = \frac{1}{2} m \left(\dot{x}^2 + \dot{y}^2 + \dot{z}^2\right) - V(x, y, z). \quad (67)$$

It then immediately follows that the three Euler-Lagrange equations (obtained by applying eq (63) to $x$, $y$, and $z$) may be combined into the vector statement

$$m\ddot{\mathbf{x}} = -\nabla V. \quad (68)$$

But $-\nabla V = \mathbf{F}$, so we again arrive at Newton's second law, $\mathbf{F} = m\mathbf{a}$, now in three dimensions. Let's now do one more example to

yyyy Drawing Datasheet By Molex Digi Key Electronics

$\int_a^b f_X(x)\,dx$. For $f_X(x)$ to be a proper distribution, it must satisfy the following two conditions: 1. The PDF $f_X(x)$ is positive-valued;

the mean $\mu$ and that attains its maximum value of $\frac{1}{\sqrt{2\pi}\,\sigma} \simeq \frac{0.399}{\sigma}$ at $x = \mu$, as represented in Figure 11 for $\mu = 2$ and $\sigma^2 = 15$. The Gaussian pdf $N(\mu, \sigma^2)$ is completely characterized by the two parameters $\mu$ and $\sigma^2$, the first and second order moments, respectively, obtainable from the pdf as $\mu = E[X] = \int_{-\infty}^{\infty} x f(x)\,dx$, (12
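The peak value $1/(\sqrt{2\pi}\,\sigma) \simeq 0.399/\sigma$ and the first-moment integral for $\mu$ can be checked numerically. This is a rough sketch using Python's standard library; the parameter values `mu = 2.0` and `sigma = 1.5` and the helper `first_moment` are assumptions made for the example, not values taken from the text.

```python
import math
from statistics import NormalDist

# Illustrative parameters (assumed for this sketch).
mu, sigma = 2.0, 1.5
dist = NormalDist(mu, sigma)

# The density is largest at x = mu, where it equals 1 / (sqrt(2*pi) * sigma).
peak = dist.pdf(mu)
closed_form = 1 / (math.sqrt(2 * math.pi) * sigma)


def first_moment(d, lo, hi, steps=20000):
    """Midpoint-rule approximation of the integral of x * f(x) over [lo, hi]."""
    width = (hi - lo) / steps
    total = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * width
        total += x * d.pdf(x) * width
    return total


# Integrate over mu +/- 10 sigma; the tails beyond that are negligible.
mean_numeric = first_moment(dist, mu - 10 * sigma, mu + 10 * sigma)
print(peak, closed_form, mean_numeric)
```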

$E[X] = \int_0^1 x f(x \mid \theta)\,dx = \int_0^1 x^{\theta+1}(\theta+1)\,dx = \frac{\theta+1}{\theta+2}\, x^{\theta+2} \Big|_0^1 = \frac{\theta+1}{\theta+2}$.

Therefore, a method of moments estimate of $\theta$ is given by

$$\hat{\mu}_1 = \frac{\hat{\theta}_{MM} + 1}{\hat{\theta}_{MM} + 2} \;\Rightarrow\; \hat{\mu}_1 (\hat{\theta}_{MM} + 2) = \hat{\theta}_{MM} + 1 \;\Rightarrow\; (\hat{\mu}_1 - 1)\,\hat{\theta}_{MM} = 1 - 2\hat{\mu}_1 \;\Rightarrow\; \hat{\theta}_{MM} = \frac{1 - 2\hat{\mu}_1}{\hat{\mu}_1 - 1},$$

where $\hat{\mu}_1 = \frac{1}{n} \sum_{i=1}^{n} X_i = \bar{X}_n$.

(b) $l(\theta) = \sum_{i=1}^{n} \left[\log(\theta + 1) + \theta \log(x_i)\right] \Rightarrow \frac{d}{d\theta}\, l(\theta) = n \cdots$
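The method of moments step above amounts to solving $\hat{\mu}_1 = (\theta + 1)/(\theta + 2)$ for $\theta$. A small sketch follows, assuming the density $f(x \mid \theta) = (\theta + 1)x^{\theta}$ on $(0, 1)$ implied by the integral; the function names and the sample values are hypothetical.

```python
from fractions import Fraction


def theta_mm(sample):
    """Method of moments estimate: theta = (1 - 2*m) / (m - 1), m = sample mean."""
    mean = sum(sample) / len(sample)  # first sample moment
    return (1 - 2 * mean) / (mean - 1)


def model_mean(theta):
    """E[X] = (theta + 1) / (theta + 2) under the assumed density."""
    return (theta + 1) / (theta + 2)


# Hypothetical observations in (0, 1), kept as fractions for exact arithmetic.
sample = [Fraction(1, 2), Fraction(2, 3), Fraction(5, 6)]
theta_hat = theta_mm(sample)
print(theta_hat, model_mean(theta_hat))
```

Plugging the estimate back into `model_mean` reproduces the sample mean, which is the defining property of the estimator.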

[Figure: hepatitis B virus replication cycle. Labels: cccDNA, rcDNA, Core, Pol, SHBs, MHBs, LHBs. Steps: attachment, entry, nuclear import, cccDNA formation, transcription (0.7 kb, 2.1 kb, 2.4 kb, and 3.5 kb pgRNA), translation, reverse]

Empirical Rule Definition

of the book, we can find numbers $a, b$ such that, for any $Q \in (0, 1)$, there is $P(a \le z \le b) = Q$. The interval $[a, b]$ is called a $Q \times 100\%$ confidence interval for $z$. We can minimise the length of the interval by disposing it symmetrically about the expected value $E(z) = 0$, since $z \sim N(0, 1)$ is symmetrically distributed about its mean of zero.

Let $X$ be the normal random variable with parameters $\mu = 71$ and $\sigma^2 = 6.25$, and $Z$ be the standard normal random variable. Note that 1 foot equals 12 inches, and $\sigma = \sqrt{6.25} = 2.5$. Then the first problem is to compute $P(X > 6 \cdot 12 + 2)$:

$$P(X > 74) = P\left(\frac{X - 71}{2.5} > \frac{74 - 71}{2.5}\right).$$

For the second problem, we need to compute $P(X > 6 \cdot 12 + 5 \mid X > 6 \cdot 12) = P(X > 77 \mid X > 72)$. Hence, $P(X > 77 \mid X >$
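Both calculations above can be reproduced with `statistics.NormalDist` from the Python standard library (Python 3.8 or later). This is a sketch only: it takes $\mu = 71$ and $\sigma = 2.5$ for the height problem, and the confidence level `q = 0.95` is an assumed example value.

```python
from statistics import NormalDist

# Height in inches, modelled as normal with mu = 71 and sigma = 2.5.
height = NormalDist(mu=71, sigma=2.5)

# First problem: P(X > 74), i.e. taller than 6 feet 2 inches.
p_over_74 = 1 - height.cdf(74)

# Second problem: P(X > 77 | X > 72) = P(X > 77) / P(X > 72).
p_over_77_given_over_72 = (1 - height.cdf(77)) / (1 - height.cdf(72))

# Symmetric Q x 100% interval [a, b] for z ~ N(0, 1); q is an assumed value.
q = 0.95
b = NormalDist().inv_cdf((1 + q) / 2)
a = -b

print(p_over_74, p_over_77_given_over_72, (a, b))
```

The conditional probability reduces to a ratio of tail probabilities because the event $X > 77$ is contained in the event $X > 72$.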

High Fat Diet Induced Colonocyte Dysfunction Escalates Microbiota Derived Trimethylamine N Oxide

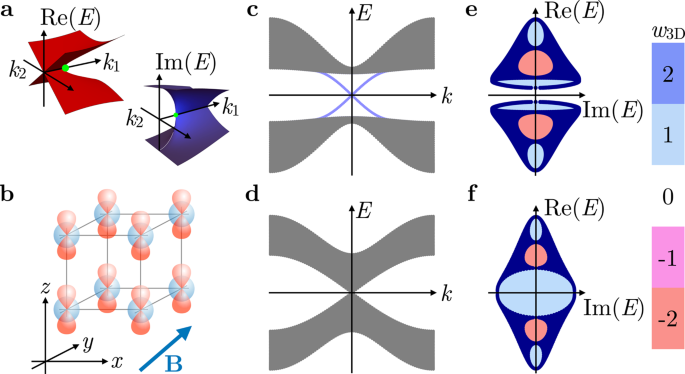

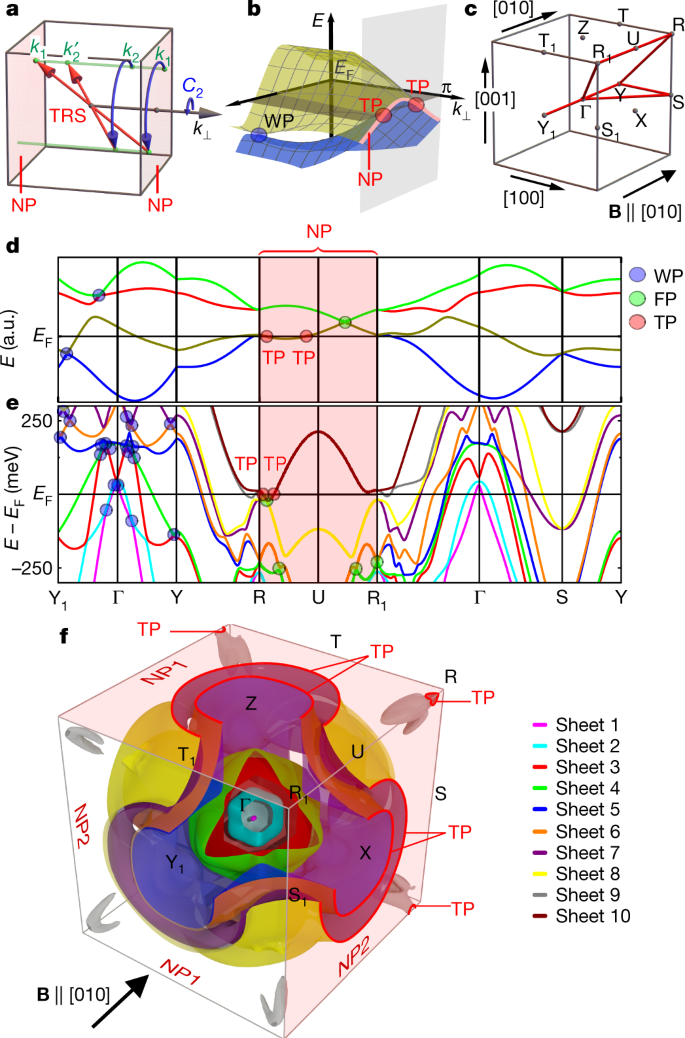

Exceptional Topological Insulators Nature Communications

SI prefix symbols: Y, Z, E, P, T, G, M, k, h, da, d, c, m, µ, n, p, f, a, z, y

Exponent (basis 10) of decimals, $E_n = 10^n$:

| Factor | European name | American name |
|---|---|---|
| $10^{3}$ | thousand | thousand |
| $10^{6}$ | million | million |
| $10^{9}$ | milliard | billion |
| $10^{12}$ | billion | trillion |
| $10^{15}$ | billiard | quadrillion |
| $10^{18}$ | trillion | quintillion |
| $10^{21}$ | trilliard | sextillion |
| $10^{24}$ | | |

be used to construct a random variable $Y = g(W)$ such that $Y$ is uniformly distributed on $\{0, 1, 2\}$.

3. Let $W$ have the density function $f$ given by $f(w) = 2/w^3$ for $w > 1$ and $f(w) = 0$ for $w \le 1$. Set $Y = \alpha + \beta W$, where $\beta > 0$. In terms of $\alpha$ and $\beta$, determine (a) the distribution function of $Y$;

$= \sum_i E(X_i)$. 3. We often write $\mu = E(X)$. 4. $\sigma^2 = \mathrm{Var}(X) = E((X - \mu)^2)$ is the variance. 5. $\mathrm{Var}(X) = E(X^2) - \mu^2$. 6.

Spintronics Of Antiferromagnetic Systems Review Article Low Temperature Physics Vol 40 No 1

Core Ac Uk

(e) We use $\mu_z = -m_\ell \mu_B$ to calculate the $z$ component of the orbital magnetic dipole moment. The multiple is $-m_\ell = -3$. (f) We use $\cos\theta = m_\ell / \sqrt{\ell(\ell + 1)}$ to calculate the angle between the orbital angular momentum vector and the $z$ axis. For $\ell = 3$ and $m_\ell = 3$, we have $\cos\theta = 3/\sqrt{12} = \sqrt{3}/2$, or $\theta = 30.0°$. (g) For $\ell = 3$ and $m_\ell = 2$, we have $\cos\theta = 2/\sqrt{12} = 1/\sqrt{3}$, or $\theta = 54.7°$. (h) For $\ell$

(c) the quantiles of $Y$;

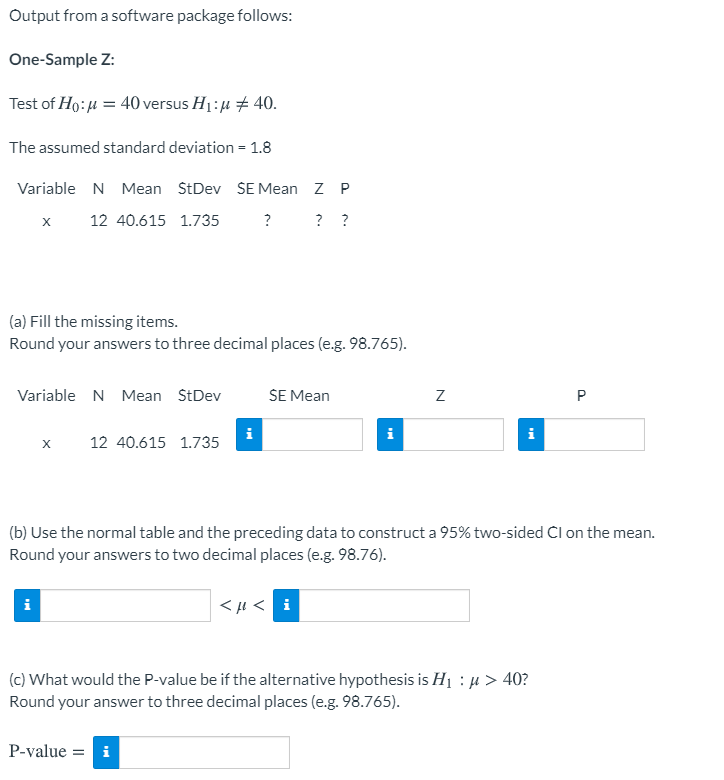

Solved Output From A Software Package Follows One Sample Z Chegg Com

Lepton Wikipedia

The μ-opioid receptors (MOR) are a class of opioid receptors with a high affinity for enkephalins and beta-endorphin, but a low affinity for dynorphins. They are also referred to as μ (mu)-opioid peptide (MOP) receptors. The prototypical μ-opioid receptor agonist is morphine, the primary psychoactive alkaloid in opium. It is an inhibitory G-protein coupled receptor that activates the G i alpha

OPTION B: INSIDE THE FRAME INSTALLATION. Fully Inside the Master Frame. TOP VIEW. INSTALLATION TYPE: OPTION A Inside the Frame; OPTION B Fully Inside the Frame; OPTION C Outside the Frame. RECORD MEASUREMENTS. THRESHOLD TRANSITION KITS: When ordering your screen you can also add a Threshold Transition Kit (see the table below). Each of the kits

Expectation of the sum of a random number of random variables: if $X = \sum_{i=1}^{N} X_i$, $N$ is a random variable independent of the $X_i$'s, and the $X_i$'s have common mean $\mu$, then $E[X] = E[N]\mu$. Example: Suppose that the expected number of acci
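The identity $E[X] = E[N]\mu$ for a random sum can be verified by brute-force enumeration when $N$ and the $X_i$ take only a few values. A minimal sketch follows; the two pmfs and the helper names are hypothetical and chosen only so that the enumeration stays small.

```python
from fractions import Fraction
from itertools import product

# Hypothetical pmfs: N is the number of terms, each X_i is i.i.d. with x_pmf.
n_pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}
x_pmf = {1: Fraction(1, 3), 4: Fraction(2, 3)}


def mean(pmf):
    """Expected value of a finite pmf given as {value: probability}."""
    return sum(v * p for v, p in pmf.items())


def random_sum_mean(n_pmf, x_pmf):
    """E[X_1 + ... + X_N] by enumerating every outcome (N independent of the X_i)."""
    total = Fraction(0)
    for n, p_n in n_pmf.items():
        # product(..., repeat=0) yields one empty tuple, whose sum is 0.
        for values in product(x_pmf, repeat=n):
            p_values = p_n
            for v in values:
                p_values *= x_pmf[v]
            total += p_values * sum(values)
    return total


lhs = random_sum_mean(n_pmf, x_pmf)
rhs = mean(n_pmf) * mean(x_pmf)
print(lhs, rhs)
```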

40 Eridani Wikipedia

yyyy Drawing Datasheet By Molex Digi Key Electronics

$f_X(x) \ge 0$ for all values of $x \in X$; 2. The rule of total probability holds;

(b) the density function of $Y$;

4 A Consumer S Preferences Are Given By The Utility Chegg Com

Tunable Photonic Devices By 3d Laser Printing Of Liquid Crystal Elastomers

$y = a e^{bx}$, where $a$ and $b$ are constants. The curve that we use to fit data sets is in this form, so it is important to understand what happens when $a$ and $b$ are changed. Recall that any number or variable when raised to the 0 power is 1. In this case, if $b$ or $x$ is 0, then $e^0 = 1$. So at the y-intercept, or $x = 0$, the function becomes $y = a \cdot 1$ or $y = a$. Therefore, the constant $a$ is the y

$\mu_X = E(X)$, $\mu_Y = E(Y)$. 1. $\mathrm{cov}(X, Y)$ will be positive if large values of $X$ tend to occur with large values of $Y$, and small values of $X$ tend to occur with small values of $Y$. For example, if $X$ is height and $Y$ is weight of a randomly selected person, we would expect $\mathrm{cov}(X, Y)$ to be positive. 2. $\mathrm{cov}(X, Y)$ will be negative if large values of $X$ tend to occur with small values of $Y$, and small values

X2 y2 z2, (e) f(x,y) = 4y (x2 1), (f) f(x,y,z) = sin(x)ey ln(z)

3. Directional Derivatives

To interpret the gradient of a scalar field $\nabla f(x, y, z) = \frac{\partial f}{\partial x}\mathbf{i} + \frac{\partial f}{\partial y}\mathbf{j} + \frac{\partial f}{\partial z}\mathbf{k}$, note that its component in the $\mathbf{i}$ direction is the partial derivative of $f$ with respect to $x$. This is the rate of change of $f$ in the $x$ direction since $y$ and $z$ are

Mesozoic Cenozoic Geological Evolution Of The Himalayan Tibetan Orogen And Working Tectonic Hypotheses American Journal Of Science

Isolation And Characterization Of Human M Defensin 3 A Novel Human Inducible Peptide Antibiotic Journal Of Biological Chemistry

Mild Or Moderate Covid 19 Nejm

Onlinelibrary Wiley Com

The total area under $f_X(x)$ is 1;

What's the conditional expectation of the number of aces in a five-card poker hand given that the first two cards in the hand are aces?

Hierarchical Group Signatures Ppt Download

Cannabis Glandular Trichomes Alter Morphology And Metabolite Content During Flower Maturation Livingston The Plant Journal Wiley Online Library

$\int_X f_X(x)\,dx = 1$. Alternately, $X$ may be described by its cumulative distribution function (CDF). The CDF of $X$ is the function $F_X(x)$ that gives, for

Insulin Resistance Is Associated With Enhanced Brain Glucose Uptake During Euglycemic Hyperinsulinemia A Large Scale Pet Cohort Diabetes Care

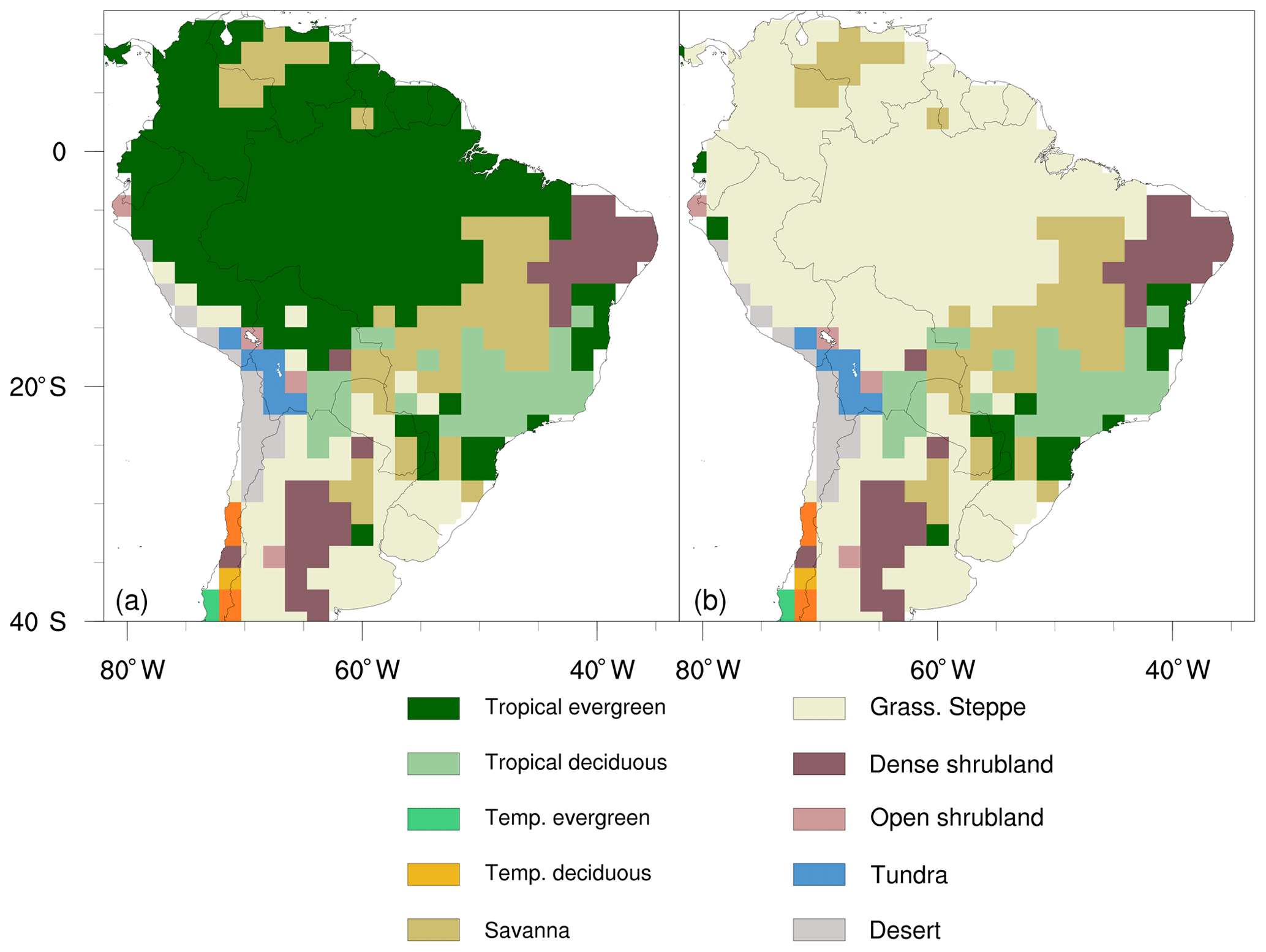

Chapter 3 Desertification Special Report On Climate Change And Land

vector of X, in terms of **a** and **b**. (b) If Y lies on BC, between B and C so that BY : YC = 1 : 3, find **y**, the position vector of Y, in terms of **b** and **c**. (c) Given that Z is the midpoint of AC, show that X, Y and Z are collinear. (d) Calculate XY : YZ.

5. The position vectors of three points A, B and C relative to an origin O are **p**, 3**q** – **p**, and 9**q** – 5**p**

Recent Progress On Stretchable Electronic Devices With Intrinsically Stretchable Components Trung 17 Advanced Materials Wiley Online Library

A Nanobody Based Molecular Toolkit Provides New Mechanistic Insight Into Clathrin Coat Initiation Elife

More generally, $E[g(X)h(Y)] = E[g(X)]\,E[h(Y)]$ holds for any functions $g$ and $h$. That is, the independence of two random variables implies that both the covariance and correlation are zero. But the converse is not true. Interestingly, it turns out that this result helps us prove a more general result, which is that the functions of two independent random variables are also independent.
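The statement that zero covariance does not imply independence can be illustrated with a standard counterexample: take $X$ uniform on $\{-1, 0, 1\}$ and $Y = X^2$. The sketch below computes everything exactly; the choice of distribution and of the test functions $g(x) = x^2$, $h(y) = y$ is an assumption made for illustration.

```python
from fractions import Fraction

# X is uniform on {-1, 0, 1} and Y = X**2, so Y is a function of X (dependent).
joint = {(x, x * x): Fraction(1, 3) for x in (-1, 0, 1)}


def expect(f):
    """E[f(X, Y)] under the joint pmf above."""
    return sum(f(x, y) * p for (x, y), p in joint.items())


# Covariance is zero even though X and Y are dependent.
cov = expect(lambda x, y: x * y) - expect(lambda x, y: x) * expect(lambda x, y: y)

# The factorisation E[g(X)h(Y)] = E[g(X)]E[h(Y)] fails for g(x) = x**2, h(y) = y.
lhs = expect(lambda x, y: (x * x) * y)
rhs = expect(lambda x, y: x * x) * expect(lambda x, y: y)
print(cov, lhs, rhs)
```

Because the factorisation fails for at least one pair of functions, $X$ and $Y$ cannot be independent, even though they are uncorrelated.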

Guppy Multiple Moving Average Gmma Definition

$\int_{-\infty}^{\infty} G(x - y', t)\, f(y')\,dy' = u(x, t)$. In the last line, we used that $G$ is an even function of its first argument. Smooth even functions have zero slope at $x = 0$, i.e., $u_x(0, t) = 0$. So we solve our semi-infinite domain problem by extending the initial data to $-\infty$

(d) the mean of $Y$;

Guidelines For The Provision And Assessment Of Nutrition Support Therapy In The Adult Critically Ill Patient Mcclave 16 Journal Of Parenteral And Enteral Nutrition Wiley Online Library

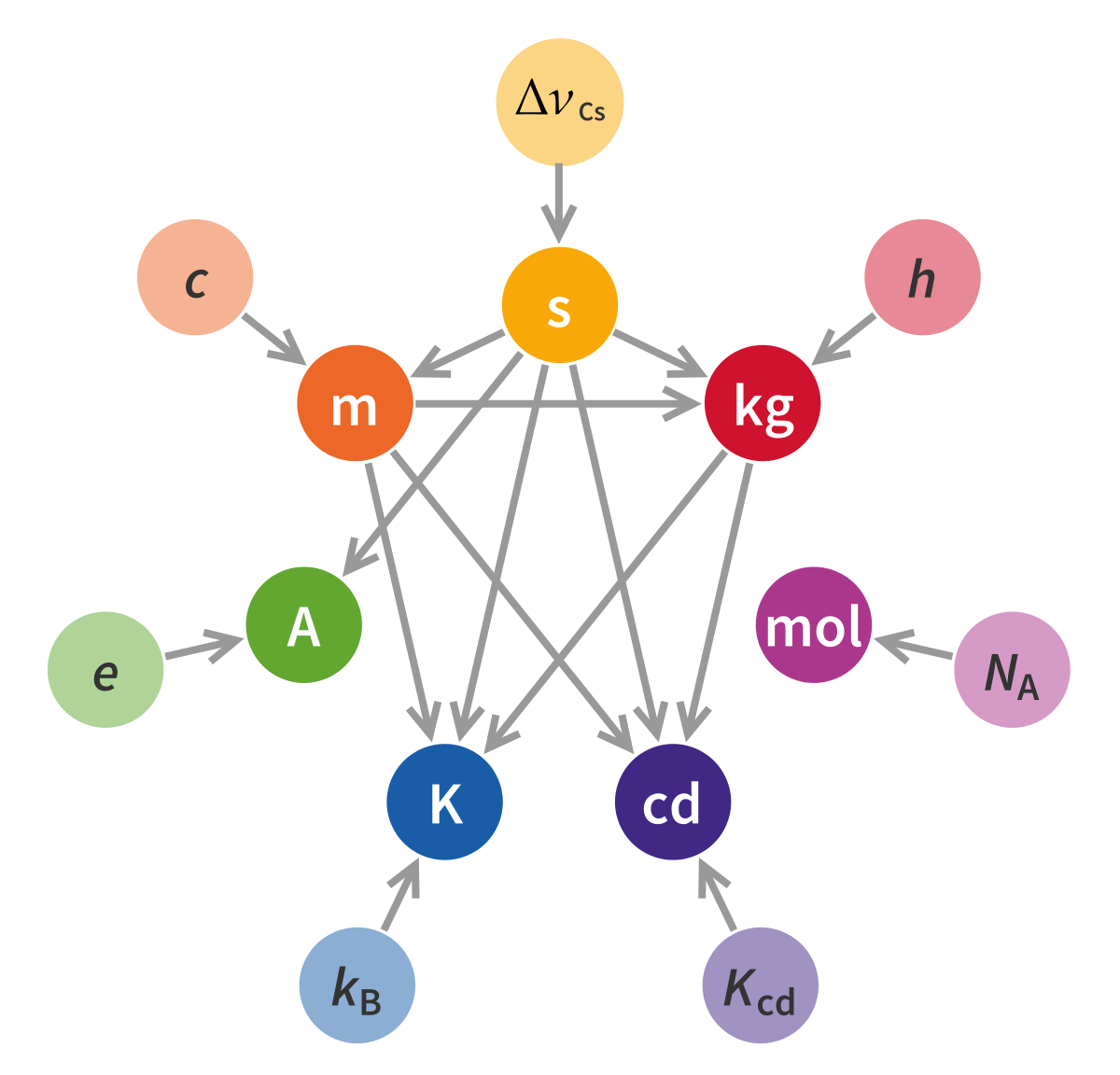

19 Redefinition Of The Si Base Units Wikipedia

Thus we can relabel the indices according to $x' = y$, $y' = z$ and $z' = x$. Thus the eigenstates of $y'$ are just the eigenstates of $z$; in terms of the primed indices we have

$$S_{x'} = S_y = \begin{pmatrix} 0 & -i \\ i & 0 \end{pmatrix} \quad (15)$$

$$S_{y'} = S_z = \begin{pmatrix} 1 & 0 \\ 0 & -1 \end{pmatrix} \quad (16)$$

$$S_{z'} = S_x = \begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix} \quad (17)$$

Note that the eigenstates of $S_y$ are only defined up to a phase factor, different

$\int_a^b |f(t) - g(t)|\,dt$, where the integral is in the sense of Riemann. $(C[a, b], d_1)$ is a metric space. Note that $(C[a, b], d_1)$ and $(C[a, b], d_\infty)$ are two distinct metric spaces.

Definition 22. A sequence in a metric space $(X, d)$ is a mapping from the natural numbers $\mathbb{N}$ into $X$, usually denoted by $(x_n)$. $(x_n)$ is said to be a convergent sequence to $x \in X$ if $\forall \varepsilon > 0$, $\exists N = N(\varepsilon) \in \mathbb{N}$ such that $n \ge N \Rightarrow d(x_n, x) < \varepsilon$

Modeling And Analysis Of The Spread Of Covid 19 Under A Multiple Strain Model With Mutations Special Issue 1 Covid 19 Unprecedented Challenges And Chances

Visualization Of B Adrenergic Receptor Dynamics And Differential Localization In Cardiomyocytes Pnas

That is, $\sqrt{n}(\bar{X}_n - \mu)/\sigma$ has a limiting standard normal distribution. The proof is almost identical to that of Theorem 5514, except that characteristic functions are used instead of mgfs.

Example (Normal approximation to the negative binomial). Suppose $X_1, \ldots, X_n$ are a random sample from a negative binomial$(r, p)$ distribution. Recall that $EX = r(1$

Minute Scale Detection Of Sars Cov 2 Using A Low Cost Biosensor Composed Of Pencil Graphite Electrodes Pnas

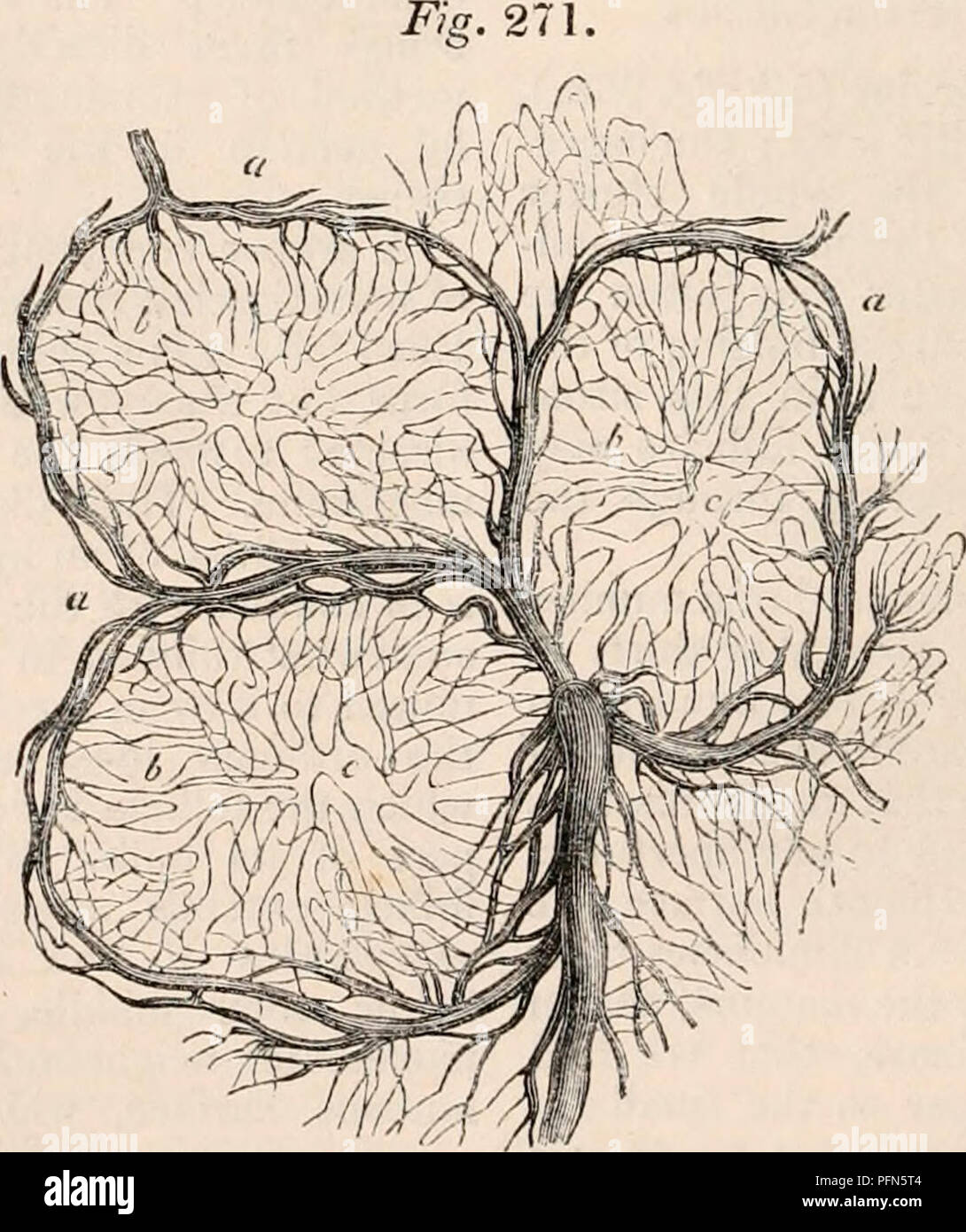

The Cyclopaedia Of Anatomy And Physiology Anatomy Physiology Zoology Flan Of An Agminate Follicle As Seen By A Vertical Section Magnified 40 Diameters A Short And Conical Villi Surrounding The Follicle

Theorem 1. Let $X, Y, Z$ be random variables, $a, b \in \mathbb{R}$, and $g: \mathbb{R} \to$

$G_n(x) = \int_{-\infty}^{x} \frac{1}{\sqrt{2\pi}}\, e^{-y^2/2}\,dy$;

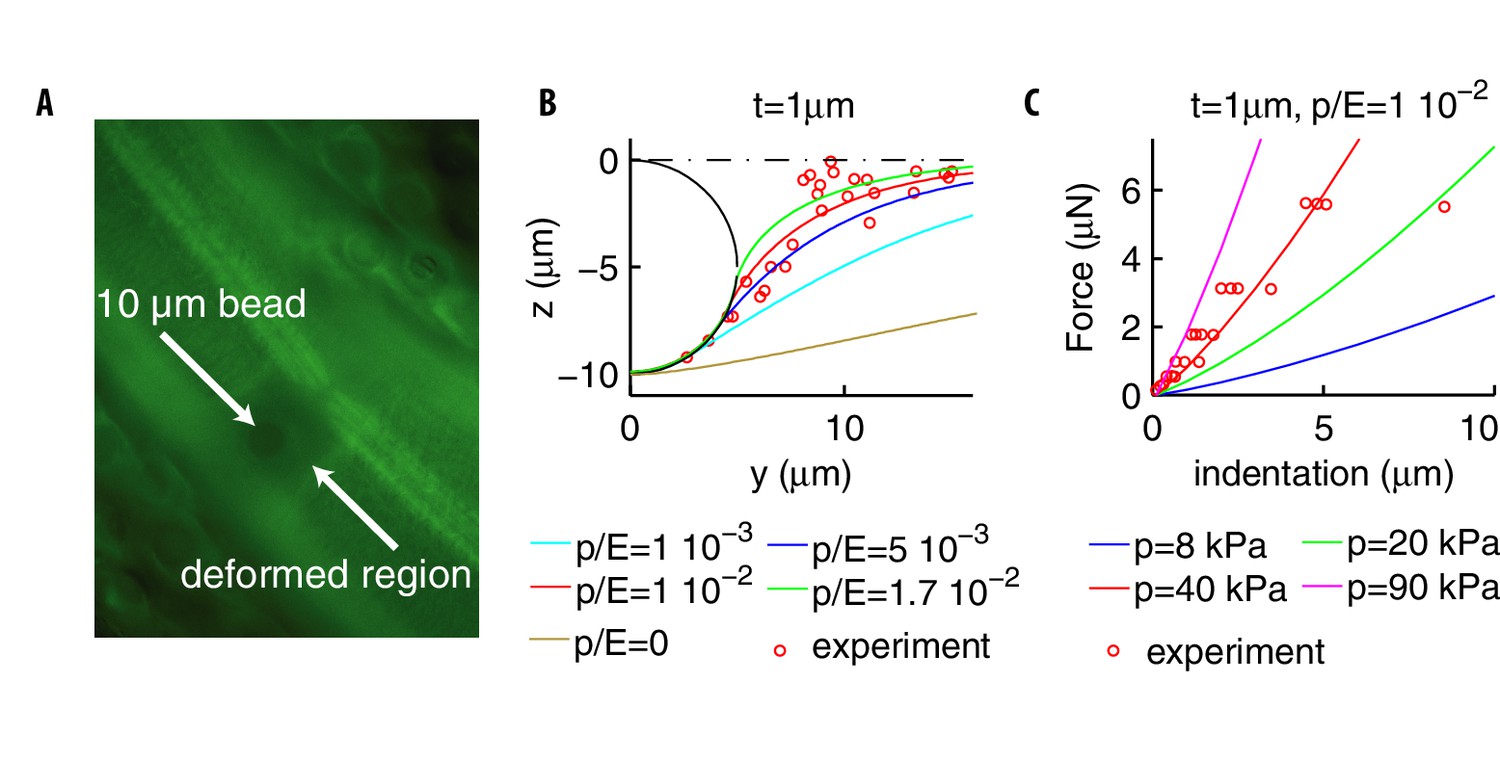

Somatosensory Neurons Integrate The Geometry Of Skin Deformation And Mechanotransduction Channels To Shape Touch Sensing Elife

Chapter 2 High Mountain Areas Special Report On The Ocean And Cryosphere In A Changing Climate

(e) the variance of $Y$. 4. Let

Let $g(x)$ be such a function. Then $E(Y - g(X))^2$ is minimized when $g(X) = E[Y \mid X]$.

Lecture 26. Examples: Toss 100 coins. What's the conditional expectation of the number of heads given the number of heads among the first fifty tosses?

| $x$ | $P(x)$ | $x\,P(x)$ | $(x - 7)^2$ | $(x - 7)^2 P(x)$ |
|---|---|---|---|---|
| 8 | 5/36 | 40/36 | 1 | 5/36 |
| 9 | 4/36 | 36/36 | 4 | 16/36 |
| 10 | 3/36 | 30/36 | 9 | 27/36 |
| 11 | 2/36 | 22/36 | 16 | 32/36 |
| 12 | 1/36 | 12/36 | 25 | 25/36 |

e.g. $E(X + Y + Z) = E(X) + E(Y) + E(Z)$). 9. If $X$ and $Y$ are independent, $E(XY) = E(X)E(Y)$. (This rule extends as you would expect it to for more than 2 random variables, e.g. $E(XYZ) = E(X)E(Y)E(Z)$.) 10. $\mathrm{COV}(X, Y) = E[(X - E(X))(Y - E(Y))] = E(XY) - E(X)E(Y)$. Question
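The table rows above match the distribution of the sum of two fair dice, with mean 7. Assuming that reading, the full table and the resulting mean and variance can be rebuilt by enumeration. This is a sketch; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import product

# pmf of the sum of two fair six-sided dice, built by enumerating all 36 outcomes.
pmf = {}
for a, b in product(range(1, 7), repeat=2):
    pmf[a + b] = pmf.get(a + b, Fraction(0)) + Fraction(1, 36)

mean = sum(x * p for x, p in pmf.items())
variance = sum((x - mean) ** 2 * p for x, p in pmf.items())
second_moment = sum(x * x * p for x, p in pmf.items())

# One row per value: x, P(x), x*P(x), (x - mean)^2, (x - mean)^2 * P(x).
rows = [
    (x, p, x * p, (x - mean) ** 2, (x - mean) ** 2 * p)
    for x, p in sorted(pmf.items())
]
for row in rows:
    print(*row)
print(mean, variance)
```

Note that `Fraction` prints values in lowest terms, so 40/36 appears as 10/9. The last assertion one might check by hand is $\mathrm{Var}(X) = E(X^2) - (E(X))^2$, which the enumeration also satisfies.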

Chebyshev S Theorem

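The mean or expected value of $g(X)$ is

$$E(g(X)) = \int g(x)\,dF(x) = \int g(x)\,dP(x) = \begin{cases} \int_{-\infty}^{\infty} g(x)\,p(x)\,dx & \text{if } X \text{ is continuous} \\ \sum_j g(x_j)\,p(x_j) & \text{if } X \text{ is discrete} \end{cases}$$

Recall that: 1. Linearity of expectations: $E\left(\sum_{j=1}^{k} c_j g_j(X)\right) = \sum_{j=1}^{k} c_j E(g_j(X))$. 2. If $X_1, \ldots, X_n$ are independent then $E\left(\prod_{i=1}^{n} X_i\right)$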

Point Of Care Testing Detection Methods For Covid 19 Lab On A Chip Rsc Publishing

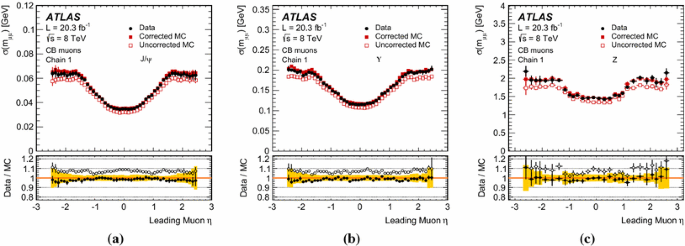

Measurement Of The Muon Reconstruction Performance Of The Atlas Detector Using 11 And 12 Lhc Proton Proton Collision Data Springerlink

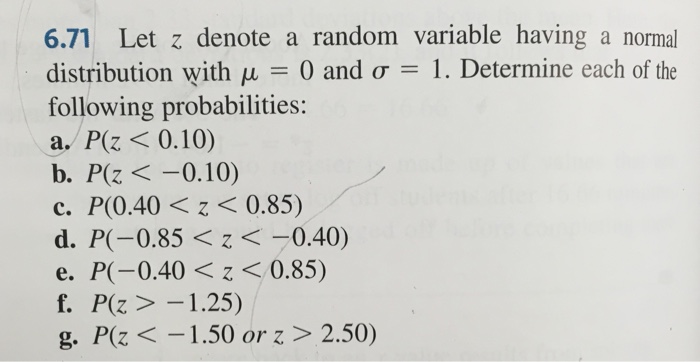

Solved 6 71 Let Z Denote A Random Variable Having A Normal Chegg Com

Three Charges Lie On The Frictionless Horizontal Surface At

A Mechanism For Hunchback Promoters To Readout Morphogenetic Positional Information In Less Than A Minute Elife

Cern To Gran Sasso Neutrino Beam Cern Sps

Arxiv Org

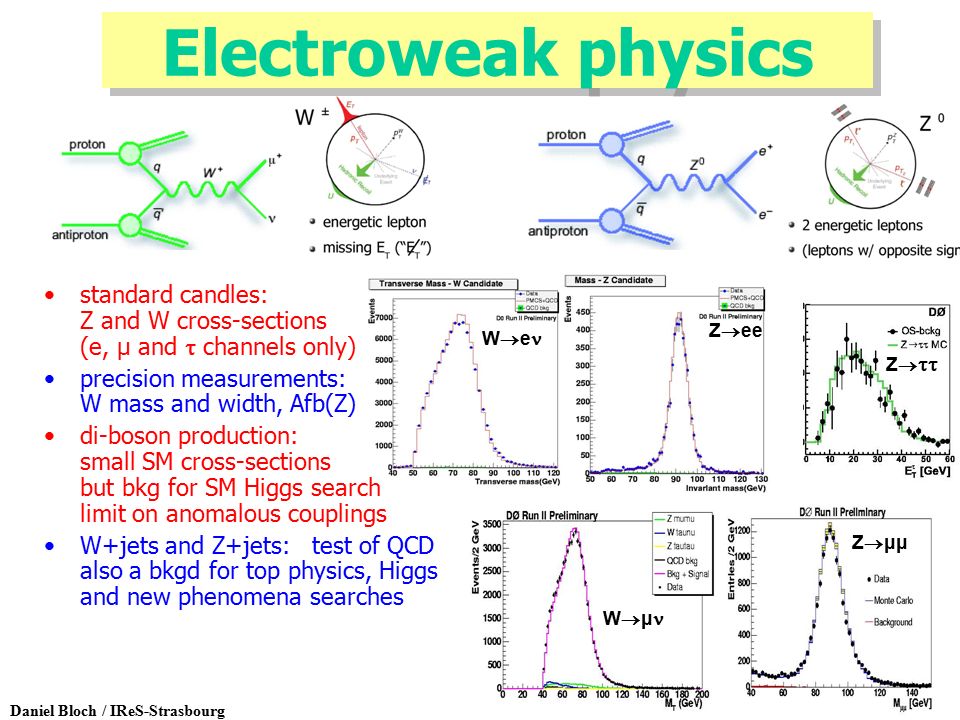

Daniel Bloch Ires Strasbourg Tev4lhc Cern April 28 Latest Physics Results From Do Qcd Electroweak Top Higgs Search New Phenomena B Physics Ppt Download

Energizing Fuel Cells With An Electrically Rechargeable Liquid Fuel Sciencedirect

Tunable Photonic Devices By 3d Laser Printing Of Liquid Crystal Elastomers

Circular Swimming Motility And Disordered Hyperuniform State In An Algae System Pnas

Pervasive Chromatin Rna Binding Protein Interactions Enable Rna Based Regulation Of Transcription Sciencedirect

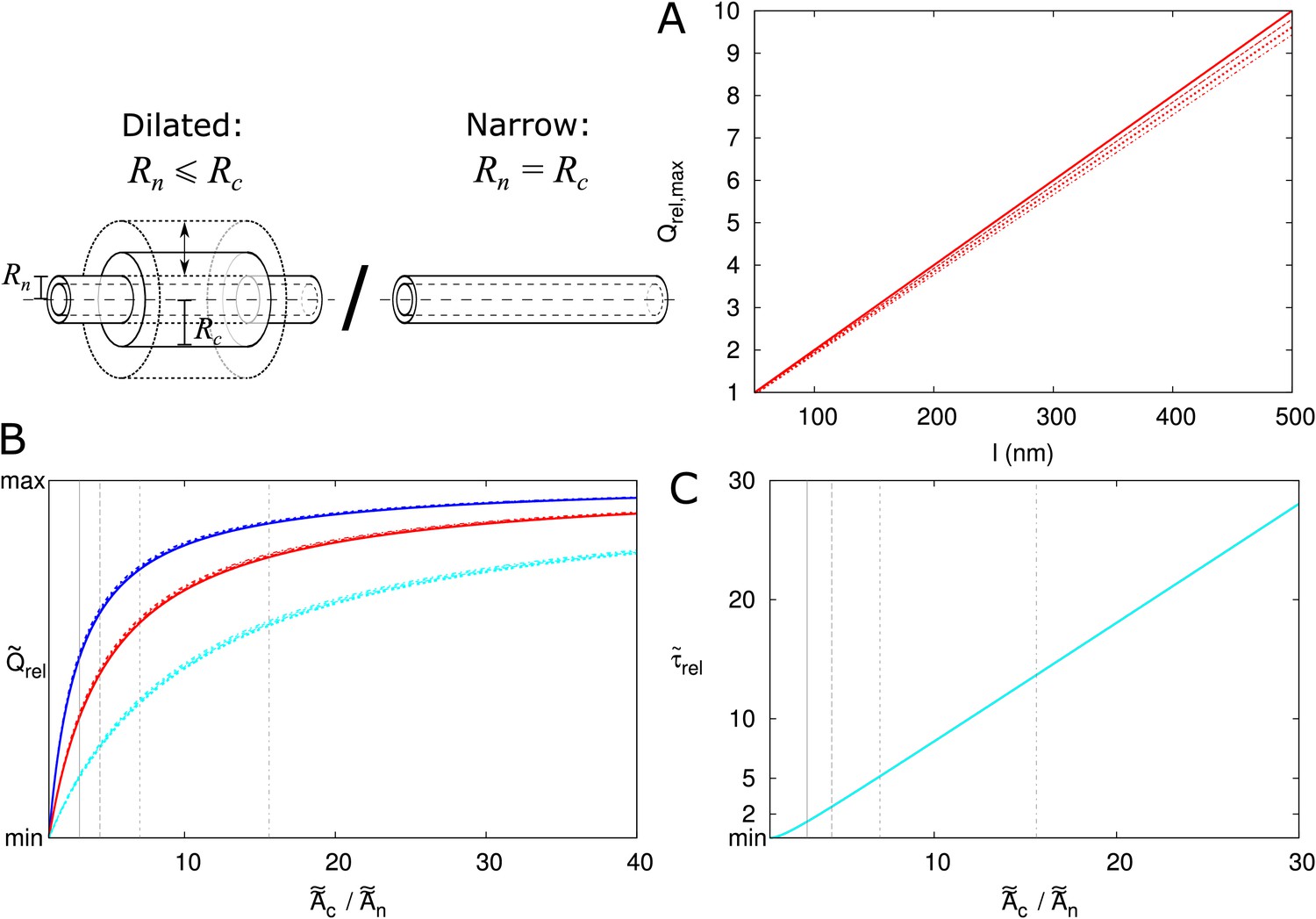

From Plasmodesma Geometry To Effective Symplasmic Permeability Through Biophysical Modelling Elife

Cell Types Of The Human Retina And Its Organoids At Single Cell Resolution Sciencedirect

Socioeconomic Status Determines Covid 19 Incidence And Related Mortality In Santiago Chile

Caenorhabditis Elegans Methionine S Adenosylmethionine Cycle Activity Is Sensed And Adjusted By A Nuclear Hormone Receptor Elife

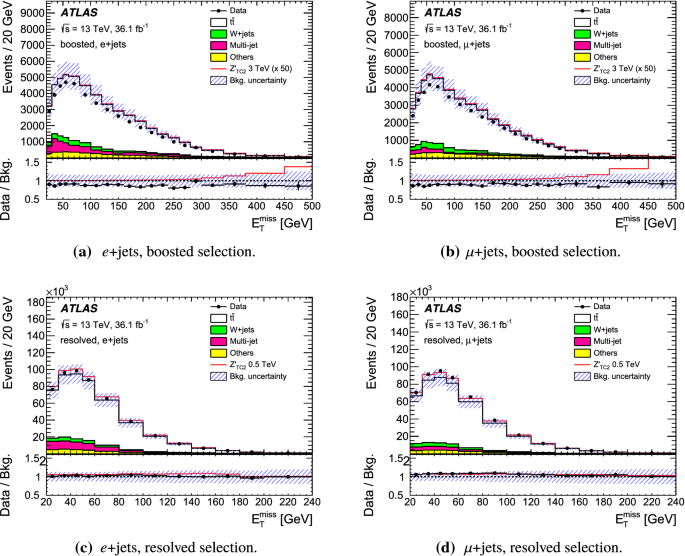

Search For Heavy Particles Decaying Into Top Quark Pairs Using Lepton Plus Jets Events In Proton Proton Collisions At Sqrt S 13 S 13 Text Tev Tev With The Atlas Detector Springerlink

Empirical Rule Definition

Bg Co2 Physiological Effect Can Cause Rainfall Decrease As Strong As Large Scale Deforestation In The Amazon

Dynamics Of Life Expectancy And Life Span Equality Pnas

Engineering Telecom Single Photon Emitters In Silicon For Scalable Quantum Photonics

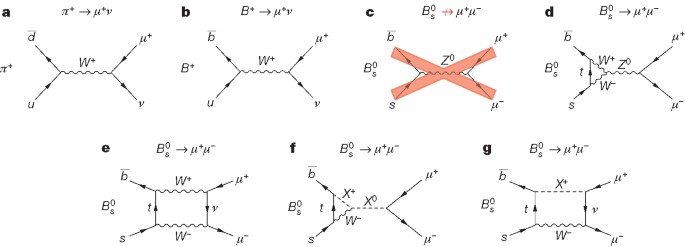

Lhcb Large Hadron Collider Beauty Experiment

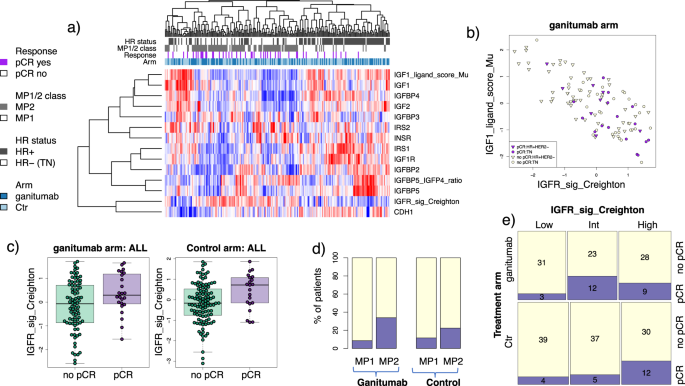

Tumour Infiltrating Lymphocytes And Prognosis In Different Subtypes Of Breast Cancer A Pooled Analysis Of 3771 Patients Treated With Neoadjuvant Therapy The Lancet Oncology

Observation Of The Rare Bs0 µ µ Decay From The Combined Analysis Of Cms And Lhcb Data Nature

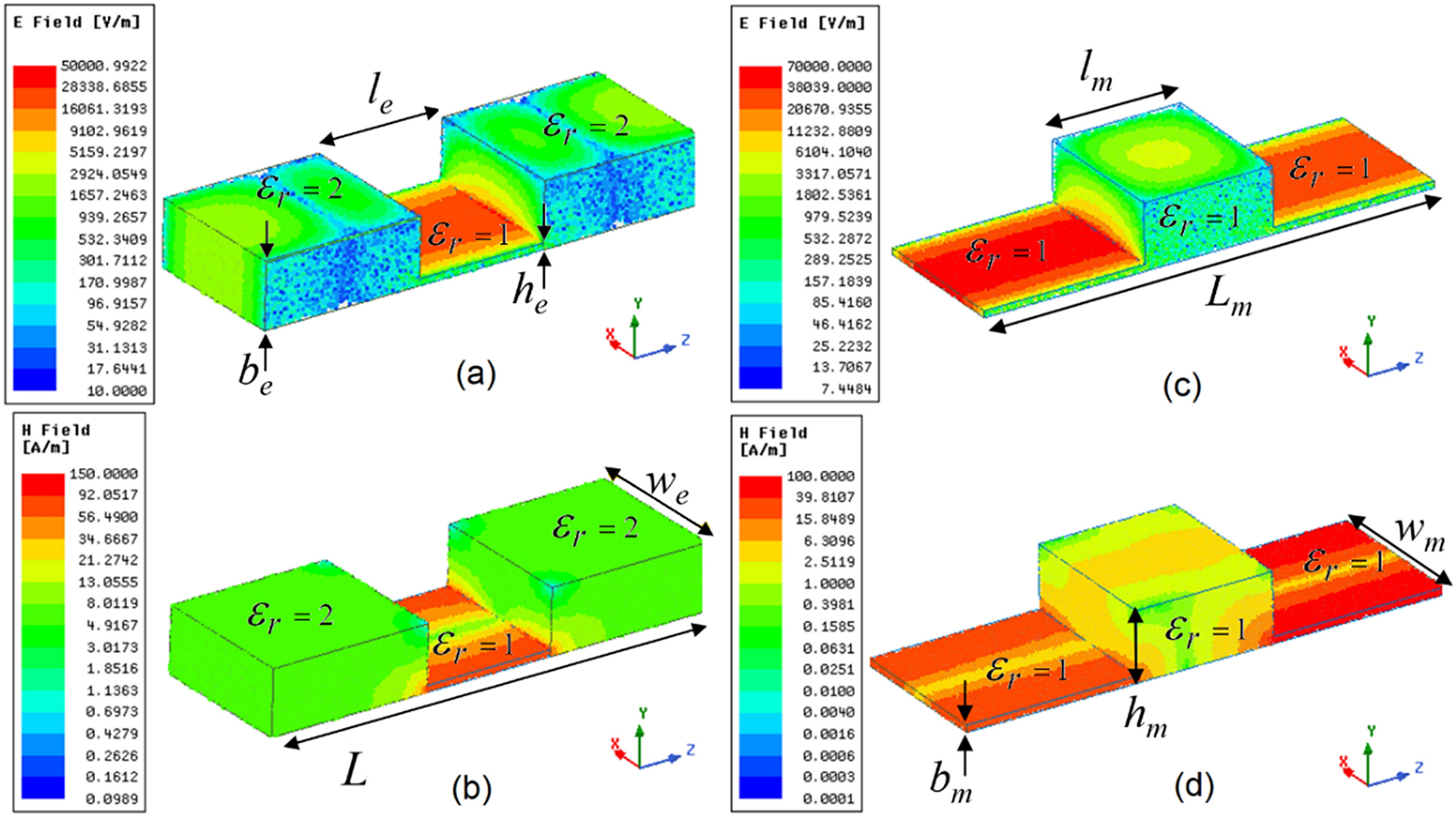

Novel Mnz Type Microwave Sensor For Testing Magnetodielectric Materials Scientific Reports

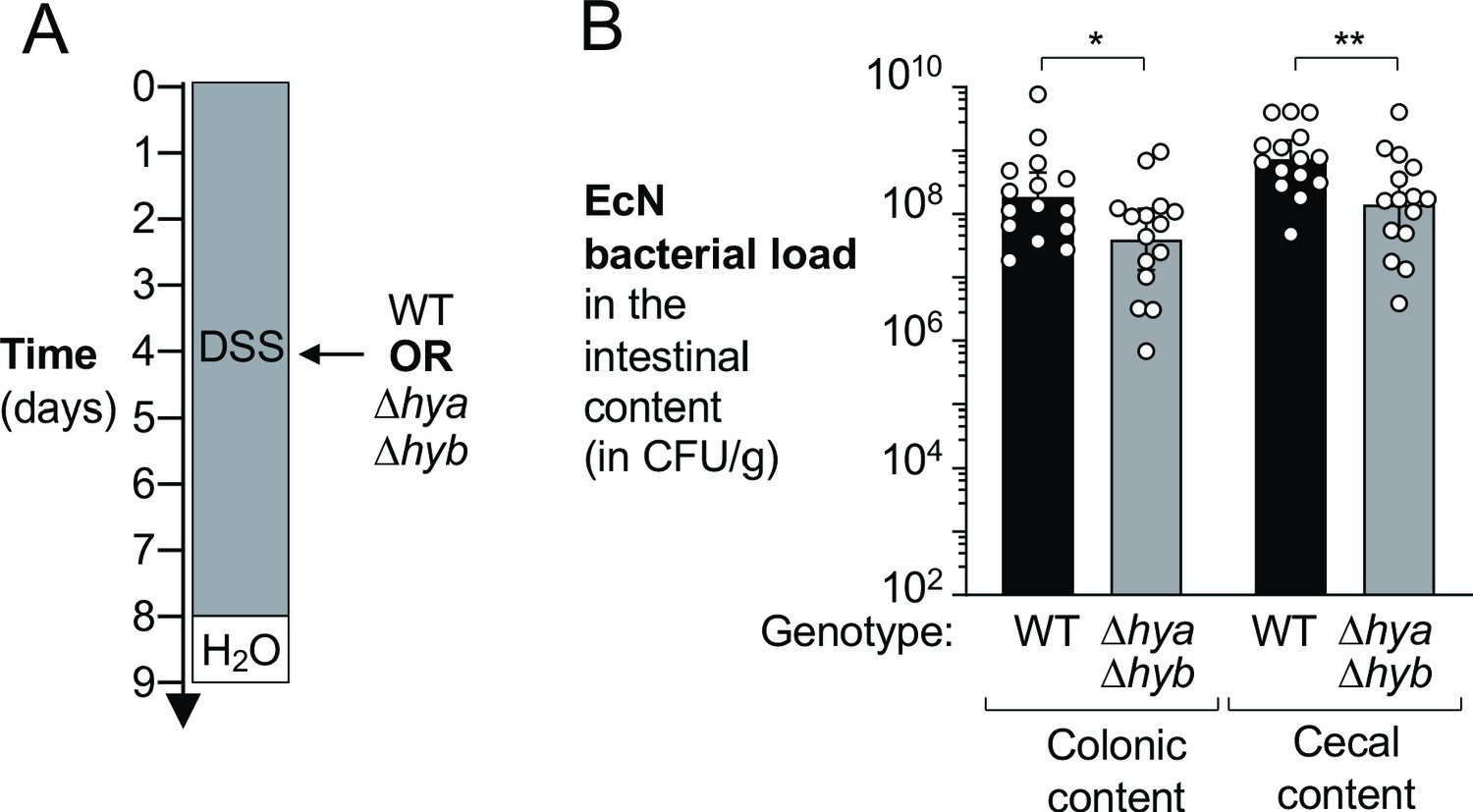

Reshaping Of Bacterial Molecular Hydrogen Metabolism Contributes To The Outgrowth Of Commensal E Coli During Gut Inflammation Elife

Gsbaptistchurch Com

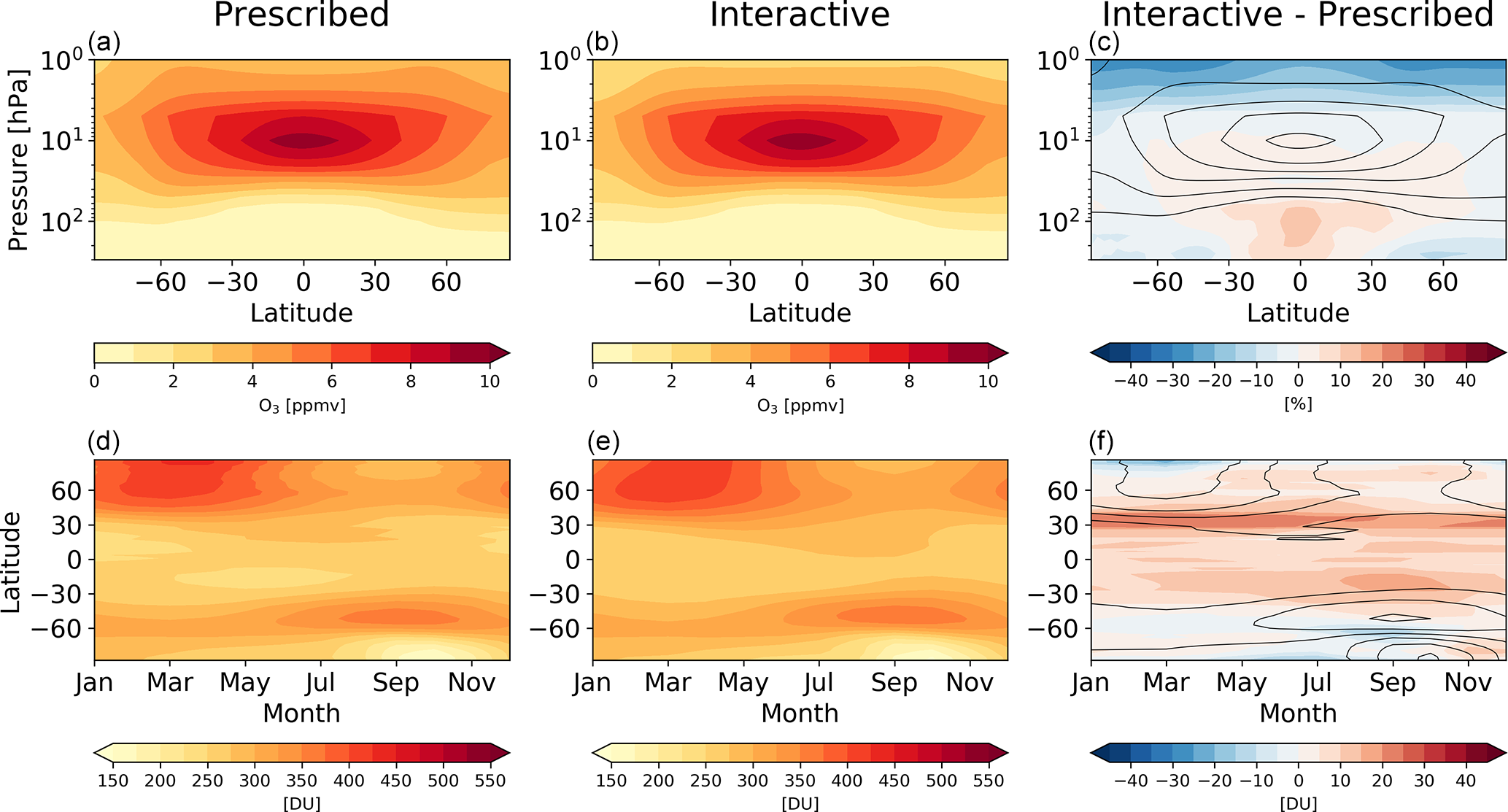

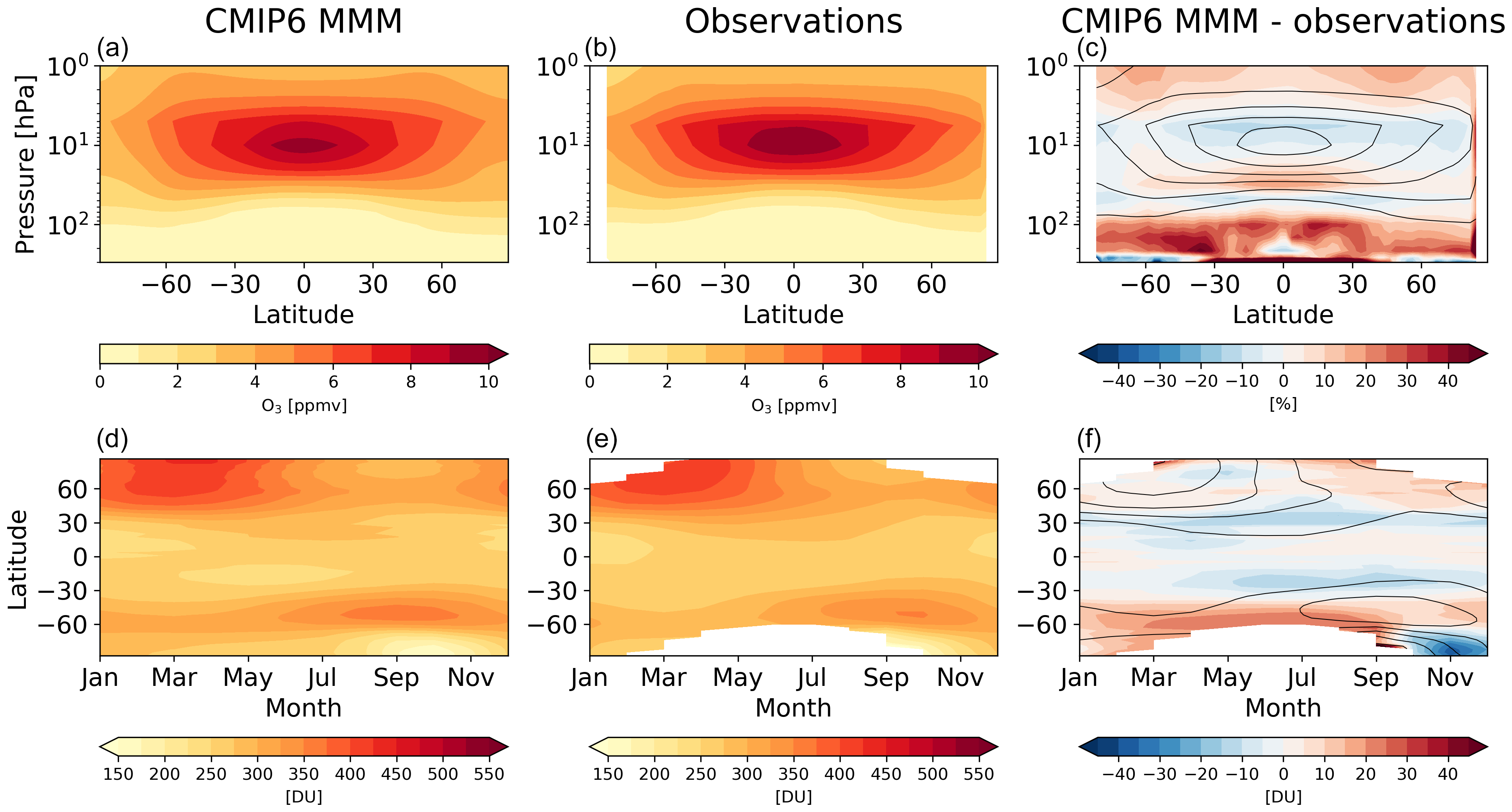

Acp Evaluating Stratospheric Ozone And Water Vapour Changes In Cmip6 Models From 1850 To 2100

O Xrhsths Charles Read Pe Sto Twitter Spell Out Your Favourite Sport To Create A Workout It Is Really Important To Stay Active Especially At This Time Let Us Know How You

Construction Of Bio Piezoelectric Platforms From Structures And Synthesis To Applications Xu 21 Advanced Materials Wiley Online Library

Scaling The Risk Landscape Drives Optimal Life History Strategies And The Evolution Of Grazing Pnas

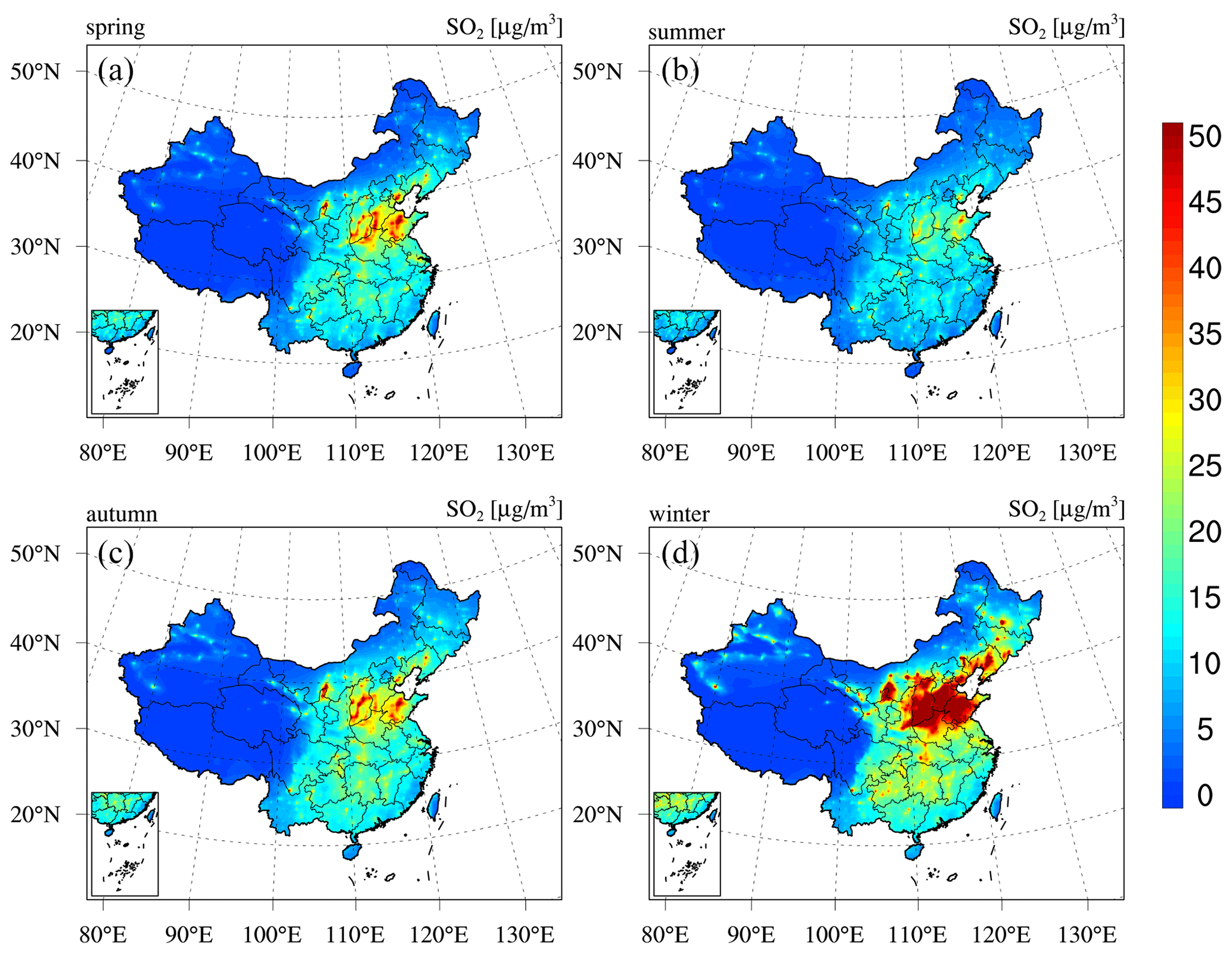

Short Term And Long Term Health Impacts Of Air Pollution Reductions From Covid 19 Lockdowns In China And Europe A Modelling Study The Lancet Planetary Health

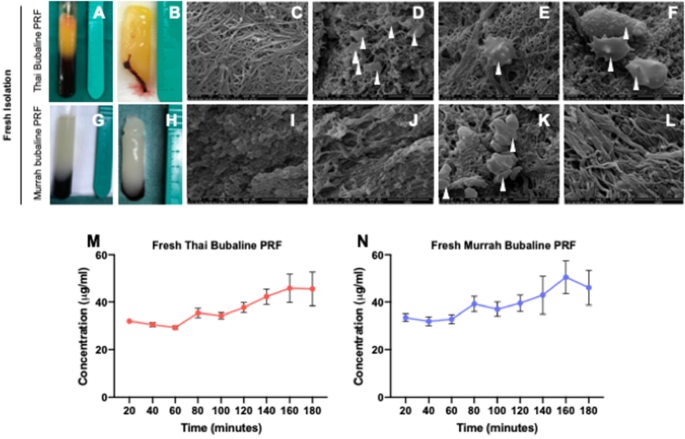

Responses Of Canine Periodontal Ligament Cells To Bubaline Blood Derived Platelet Rich Fibrin In Vitro Scientific Reports

Ahbar Eger

Lhcb Large Hadron Collider Beauty Experiment

Insights Into The Composition Of Ancient Egyptian Red And Black Inks On Papyri Achieved By Synchrotron Based Microanalyses Pnas

Notes On Austro Hungarian Fuzes

Evidence For The Associated Production Of The Higgs Boson And A Top Quark Pair With The Atlas Detector Cern Document Server

Electron Paramagnetic Resonance Wikipedia

Inverse Design In Photonics By Topology Optimization Tutorial

Decalogo Del Emprendedor

Supplementary Registration Sliit Academy Student Affairs Facebook

Fortinet Com

Between Tumor And Within Tumor Heterogeneity In Invasive Potential

Essd A 6 Year Long 13 18 High Resolution Air Quality Reanalysis Dataset In China Based On The Assimilation Of Surface Observations From Cnemc

Arxiv Org

Modulation Of Chemical Composition And Other Parameters Of The Cell At Different Exponential Growth Rates Ecosal Plus

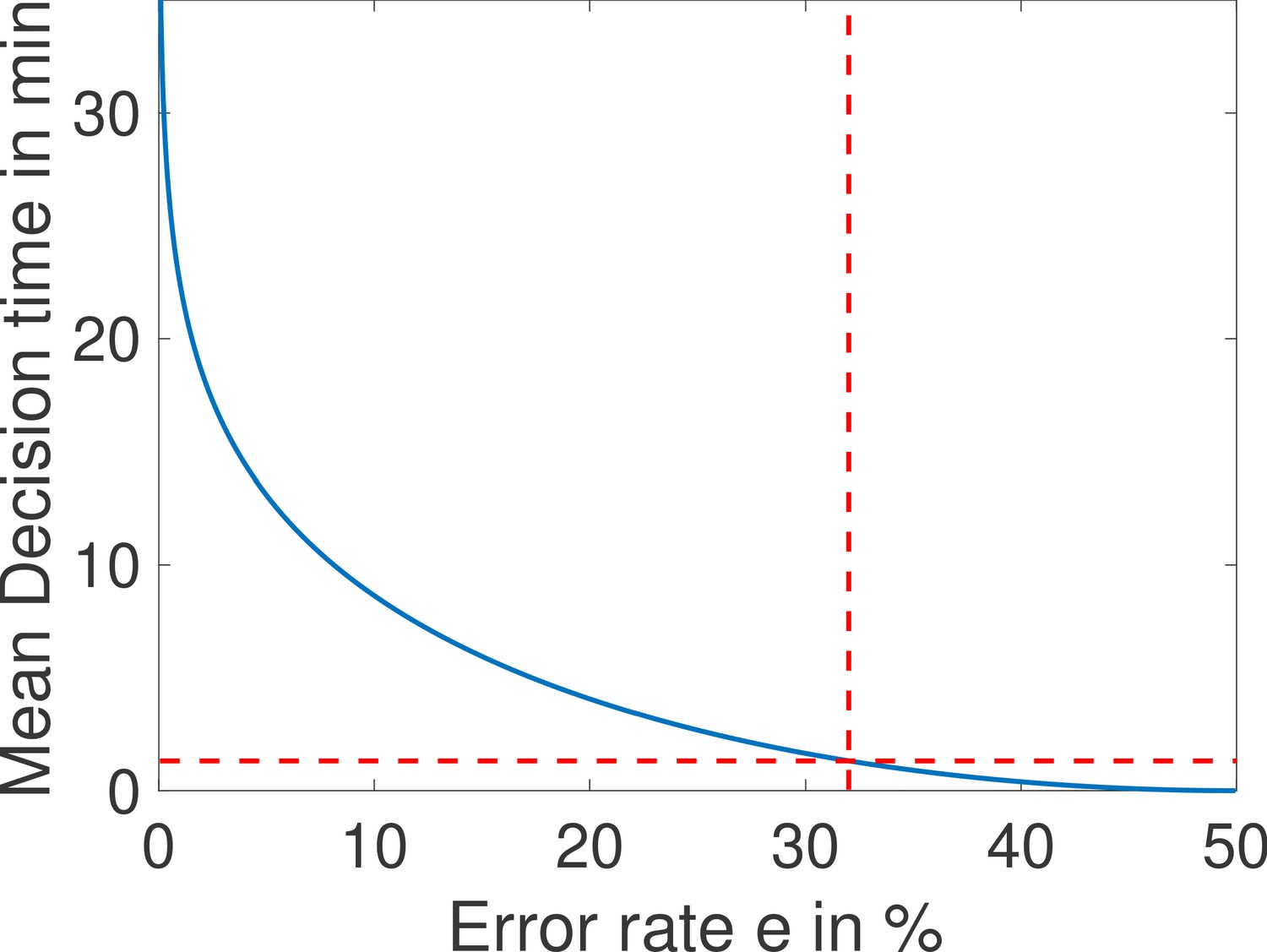

Machine Learning Of Eeg Spectra Classifies Unconsciousness During Gabaergic Anesthesia

Lhcb Large Hadron Collider Beauty Experiment

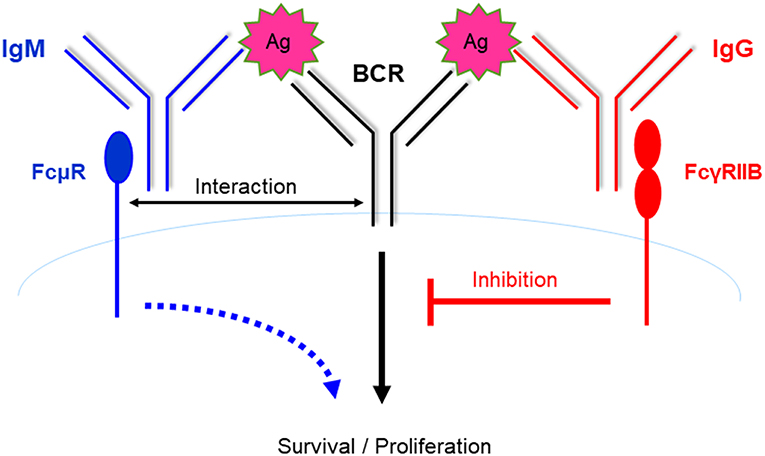

Frontiers Role Of The Igm Fc Receptor In Immunity And Tolerance Immunology

Most Likely Values C 8 Of The Parameters 8 G D Zm µ Zm S Zv µ Download Table

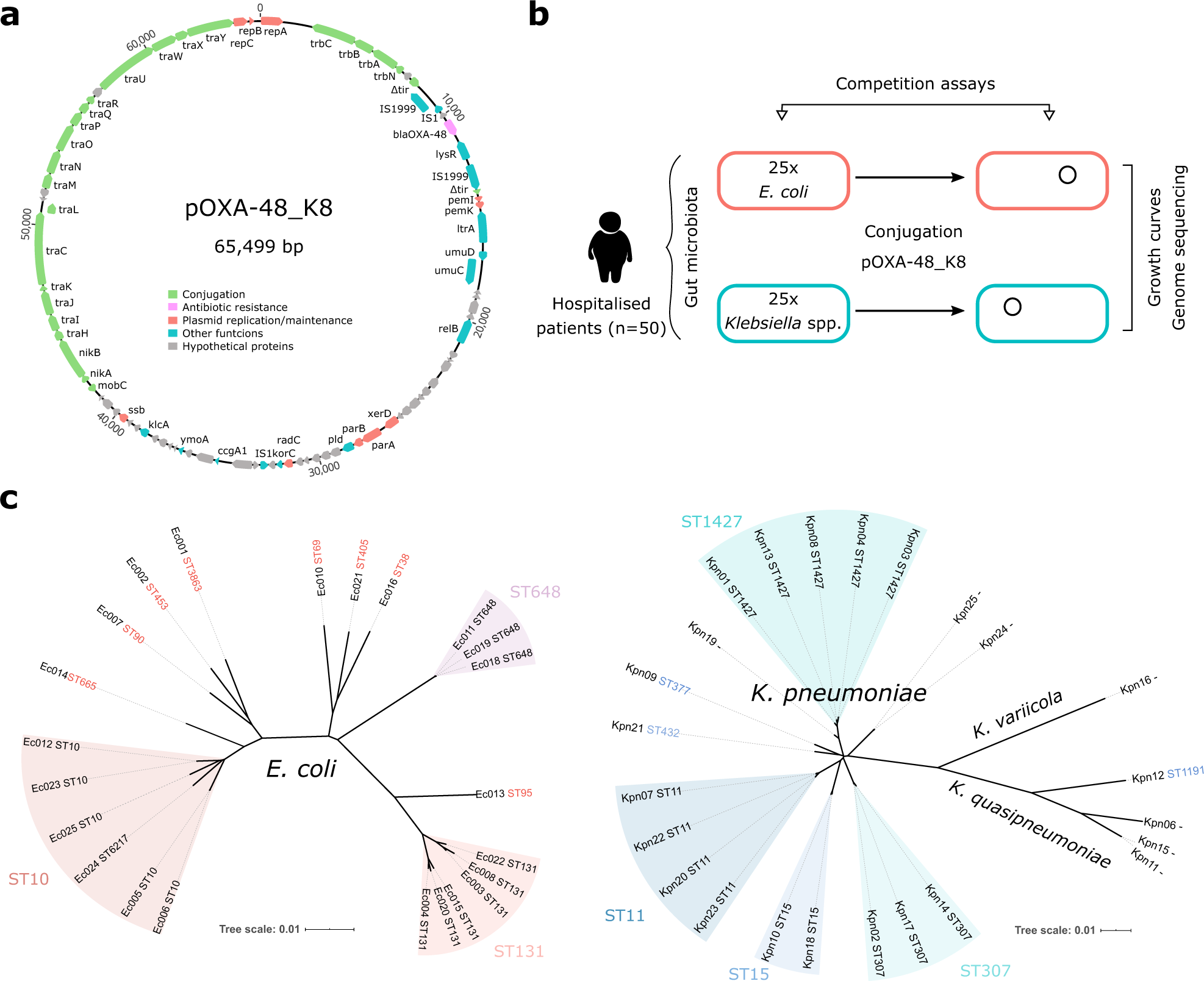

Variability Of Plasmid Fitness Effects Contributes To Plasmid Persistence In Bacterial Communities Nature Communications

Synthesis Of Micro Nanoscaled Metal Organic Frameworks And Their Direct Electrochemical Applications Chemical Society Reviews Rsc Publishing Doi 10 1039 C7csd

Performance Of Electron And Photon Triggers In Atlas During Lhc Run 2 Cern Document Server

Arxiv Org

Lew White Covenant Of Love A Life Death Perspective Facebook

Band Gap Energy An Overview Sciencedirect Topics

40 Word Searches For Advanced Spellings In Pdf Format Teaching Resources

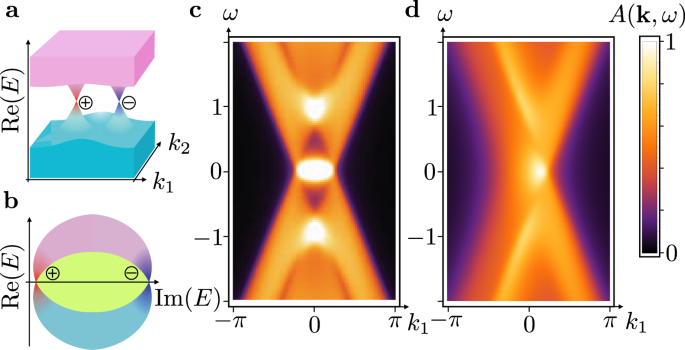

Exceptional Topological Insulators Nature Communications

Symmetry Enforced Topological Nodal Planes At The Fermi Surface Of A Chiral Magnet Nature

Expected Value Of A Binomial Variable Video Khan Academy

Curcumin Inhibits Formation Of Amyloid B Oligomers And Fibrils Binds Plaques And Reduces Amyloid In Vivo Journal Of Biological Chemistry

Biocatalytic Synthesis Of Planar Chiral Macrocycles

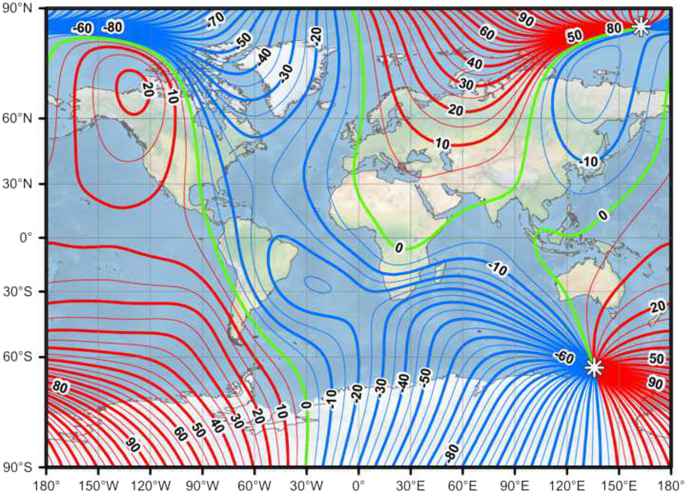

International Geomagnetic Reference Field The Thirteenth Generation Earth Planets And Space Full Text

Acp Evaluating Stratospheric Ozone And Water Vapour Changes In Cmip6 Models From 1850 To 2100

Nuclear Shell Model Wikipedia

Electric Field For 0 L Long Rtl Configuration Positive Variation Download Scientific Diagram
